Prototyping & Wireframing: Reducing Development Risk Before Code

The global technology landscape is crowded with the remains of ambitious AI initiatives that promised transformation but delivered disappointment. Across multinational enterprises and fast-growing startups, the pattern repeats with alarming consistency.

Budgets disappear. Engineering cycles stall. Strategic momentum collapses.

Artificial intelligence is not fragile. Strategy often is.

When leadership launches AI initiatives without structure, even the most advanced AI model turns into an expensive experiment instead of a scalable business asset. Hype creates motion, but discipline creates results.

AI failures follow recognizable patterns. These patterns appear across industries, regions, and use cases.

Data quality defines whether any AI solution survives beyond the demo phase.

Inconsistent records, biased samples, missing values, and outdated sources corrupt results at the very beginning. Generative AI amplifies this problem because errors scale instantly across outputs.

Legal, financial, and healthcare examples already show how unreliable data leads to fines, loss of public trust, and regulatory exposure.

Artificial intelligence operates on one rule: output quality equals input quality.

Without structured governance, validation, and clean pipelines, ensuring AI accuracy becomes impossible.

High-performing AI organizations treat data as infrastructure, not as a byproduct.
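Validation and cleaning can start as simple, explicit rules applied before any record reaches a model. The sketch below is illustrative only. The record schema (`customer_id`, `region`, `amount`, `updated_on`), the one-year freshness rule, and the helper `validate_records` are hypothetical assumptions. It shows the three checks named above: missing values, inconsistent types, and outdated sources.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

# Hypothetical schema and freshness rule, for illustration only.
REQUIRED_FIELDS = ("customer_id", "region", "amount", "updated_on")
MAX_AGE = timedelta(days=365)  # assumption: records older than a year count as outdated


@dataclass
class ValidationReport:
    valid: list = field(default_factory=list)
    rejected: list = field(default_factory=list)  # (record, reason) pairs


def validate_records(records, today):
    """Reject missing, non-numeric, or stale records; standardize the rest."""
    report = ValidationReport()
    for rec in records:
        missing = [f for f in REQUIRED_FIELDS if rec.get(f) is None]
        amount = rec.get("amount")
        if missing:
            report.rejected.append((rec, "missing: " + ", ".join(missing)))
        elif isinstance(amount, bool) or not isinstance(amount, (int, float)):
            report.rejected.append((rec, "amount is not numeric"))
        elif today - rec["updated_on"] > MAX_AGE:
            report.rejected.append((rec, "outdated"))
        else:
            # Standardize inconsistent formatting before the record enters the pipeline.
            report.valid.append({**rec, "region": rec["region"].strip().upper()})
    return report


today = date(2024, 6, 1)
records = [
    {"customer_id": 1, "region": " eu ", "amount": 120.0, "updated_on": date(2024, 5, 1)},
    {"customer_id": 2, "region": "US", "amount": None, "updated_on": date(2024, 5, 1)},
    {"customer_id": 3, "region": "US", "amount": "n/a", "updated_on": date(2024, 5, 1)},
    {"customer_id": 4, "region": "APAC", "amount": 80, "updated_on": date(2022, 1, 1)},
]
report = validate_records(records, today)
print(len(report.valid), [reason for _, reason in report.rejected])
# 1 ['missing: amount', 'amount is not numeric', 'outdated']
```

Rejected records keep their reason attached. Data issues can then be traced back to their source instead of silently disappearing from the pipeline.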

AI adoption often begins with small pilot programs. Full deployment reveals the real price.

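Enterprise AI solutions require sustained investment in infrastructure, cloud services, retraining, and monitoring, not just initial development.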

Feature creep accelerates the burn rate. A single added function inside an AI solution can multiply compute, storage, and maintenance costs.

When financial planning lacks discipline, pilot systems become money traps. Projects stall halfway through implementation.

AI success depends on cost control linked directly to measurable outcomes.

Market hype convinces many leaders that artificial intelligence delivers instant transformation.

In reality, machine learning development services operate through iteration. No AI model reaches optimal performance at launch; accuracy improves only through retraining, feedback loops, and evaluation cycles.

Generative AI is not plug-and-play.

AI engineering functions as a living system, not a one-time installation.

Projects fail when expectations outpace preparation and infrastructure.

A proof of concept protects investment and credibility.

Without validation, organizations scale ideas that never prove business value, and AI systems move into production without being tested for bias, accuracy, or operational reliability.

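A strong proof of concept validates feasibility, accuracy, and business value before scaling, and exposes data gaps and operational inefficiencies early.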

Skipping this stage leads directly to project failures.
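A proof of concept can be framed as an explicit go/no-go gate. Stakeholders agree on criteria before the work starts, and the PoC passes only if every criterion is met. In the sketch below, the metric names, the thresholds, and the helpers `group_accuracy_gap` and `evaluate_poc` are hypothetical assumptions. The bias check is deliberately simple: it measures the spread in accuracy across user groups.

```python
import operator

# Hypothetical go/no-go criteria, agreed with business stakeholders before the PoC starts.
CRITERIA = {
    "accuracy": (operator.ge, 0.85),
    "max_group_accuracy_gap": (operator.le, 0.05),  # simple bias check across user groups
    "p95_latency_ms": (operator.le, 300),
    "error_rate": (operator.le, 0.01),
}


def group_accuracy_gap(y_true, y_pred, groups):
    """Largest difference in accuracy between any two groups."""
    per_group = {}
    for truth, pred, group in zip(y_true, y_pred, groups):
        correct, total = per_group.get(group, (0, 0))
        per_group[group] = (correct + (truth == pred), total + 1)
    accuracies = [c / n for c, n in per_group.values()]
    return max(accuracies) - min(accuracies)


def evaluate_poc(results, criteria=CRITERIA):
    """Return (passed, failures); a metric that was never measured also fails."""
    failures = []
    for metric, (meets, threshold) in criteria.items():
        value = results.get(metric)
        if value is None:
            failures.append(f"{metric}: not measured")
        elif not meets(value, threshold):
            failures.append(f"{metric}: {value:.3f} misses threshold {threshold}")
    return not failures, failures


y_true = [1, 0, 1, 1, 0, 1]
y_pred = [1, 0, 1, 0, 0, 1]
groups = ["a", "a", "a", "b", "b", "b"]
results = {
    "accuracy": sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true),
    "max_group_accuracy_gap": group_accuracy_gap(y_true, y_pred, groups),
    "p95_latency_ms": 240,
    "error_rate": 0.004,
}
passed, failures = evaluate_poc(results)
print(passed, failures)
```

Treating an unmeasured metric as a failure keeps a PoC from passing simply because nobody tested for bias or reliability.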

AI does not fail because of code alone. AI fails because people operate in isolation. Business leaders define objectives. Technical teams build systems. Without shared metrics and a common language, results drift away from goals.

Research consistently shows that misalignment between business and engineering undermines AI initiatives. Models may function technically but fail commercially.

AI management requires unified leadership, not isolated departments.

Artificial intelligence as a service delivers value only when teams understand how to apply it.

Without expertise, organizations chase tools instead of solving problems. Engineers pursue complexity where simplicity would deliver faster results.

A machine learning development company can supply this expertise, which internal teams often lack at scale.

An AI model does not stay accurate forever. Data drifts. Markets shift. User behavior evolves. Business rules change. When models remain static, relevance erodes quietly until performance collapses.

AI systems operate inside living environments. Inputs never remain fixed. Without continuous tuning, predictions weaken, decision quality declines, and trust disappears.

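Ensuring AI reliability depends on structured lifecycle management: monitoring for drift, retraining on fresh data, version control, and measuring performance against real-world outcomes.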

AI success depends on ongoing management, not one-time deployment. A model must evolve as fast as the environment it serves.
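Drift monitoring usually starts by comparing the distribution of a feature at training time with what the model sees in production. The sketch below uses the Population Stability Index (PSI), one common choice among several drift metrics. The 10-bin layout and the 0.25 retraining threshold are assumptions based on a widely used rule of thumb, not fixed standards. The helper names are hypothetical.

```python
import math


def psi(reference, current, bins=10, eps=1e-6):
    """Population Stability Index of `current` against `reference` for one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid division by zero for a constant feature

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Bin edges come from the reference data; out-of-range values go to the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    ref, cur = shares(reference), shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


def needs_retraining(reference, current, threshold=0.25):
    """Flag a feature whose distribution has shifted past the (assumed) threshold."""
    return psi(reference, current) > threshold


reference = [i / 100 for i in range(1000)]  # values seen at training time
same = list(reference)
shifted = [v + 3 for v in reference]        # the same feature after the inputs drift
print(round(psi(reference, same), 3), needs_retraining(reference, shifted))
```

In practice a check like this would run on a schedule for each important feature. A flagged feature becomes a trigger for investigation and retraining rather than an automatic redeploy.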

AI systems handle sensitive business data, customer records, and operational intelligence. Without strong security controls, risk expands rapidly.

Enterprise AI solutions must operate inside secure and compliant frameworks that protect this data.

Ignoring security exposes organizations to legal penalties, financial loss, and reputational damage.

Security must be designed into the AI system from the start, not added after deployment. AI without governance becomes a liability instead of an advantage.

Modern AI platforms do not operate in isolation; they exist within complex, layered ecosystems. Success depends not only on the intelligence of a model but also on the health and stability of the systems around it.

Many AI tools rely on wrapper products or third-party interfaces that handle distribution, user access, and workflow integration. These wrappers often absorb operational costs while paying upstream fees to AI providers. If these layers collapse due to financial strain, user attrition, or poor adoption, the underlying AI platform loses not only revenue but also its distribution network and market reach.

Even industry-leading models are exposed to ecosystem fragility. Dominance in AI depends on economic stability, platform resilience, and end-to-end operational planning, not just algorithmic superiority. Projects fail when ecosystems are ignored, infrastructure is brittle, or dependencies are mismanaged.

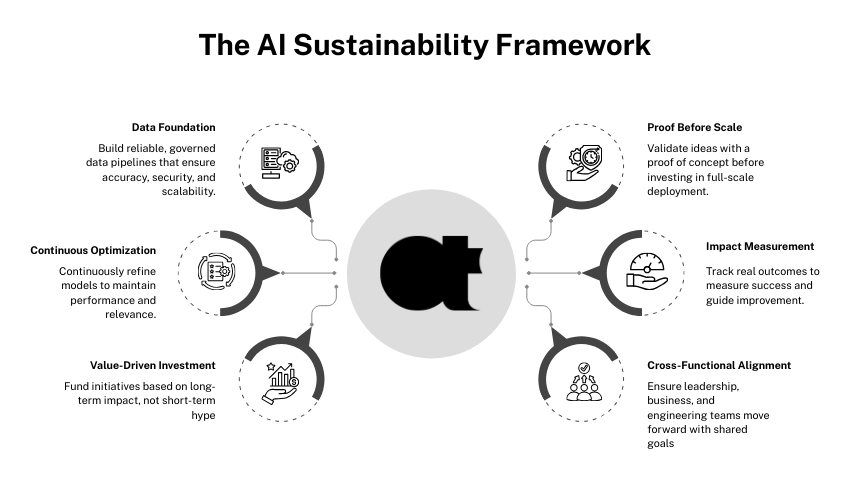

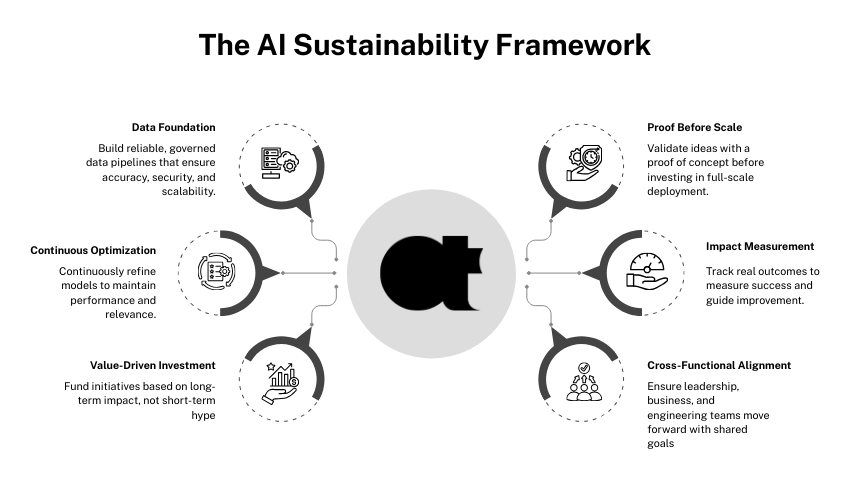

AI project survival depends on structure, planning, and continuous management, rather than shortcuts or chasing hype. Implementing a disciplined framework early ensures both immediate results and long-term scalability.

Ensure data is accurate, consistent, and accessible.

Implement validation, cleaning, and standardization processes to prevent flawed outputs.

Test models under real-world conditions to validate assumptions.

Identify potential pitfalls, data gaps, and operational inefficiencies early.

Create shared objectives and metrics for success.

Encourage cross-functional collaboration to avoid siloed decisions.

Budget for deployment, retraining, and monitoring, not just initial development.

Account for feature expansions, infrastructure scaling, and lifecycle management.
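A simple multi-year cost model shows how recurring costs can outweigh the initial build. The sketch below is hypothetical. The `CostPlan` fields, the yearly infrastructure growth rate (standing in for feature expansion and scaling), and every figure in the usage lines are illustrative assumptions, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class CostPlan:
    """Hypothetical lifecycle budget; every figure is an illustrative assumption."""
    initial_development: float
    monthly_infrastructure: float     # compute, storage, cloud services
    monthly_monitoring: float
    retraining_cost: float            # cost of one retraining cycle
    retrains_per_year: int
    annual_infra_growth: float = 0.0  # feature expansion and scaling, as a yearly rate

    def total_cost(self, years: int) -> float:
        total = self.initial_development
        infra = self.monthly_infrastructure
        for _ in range(years):
            total += 12 * (infra + self.monthly_monitoring)
            total += self.retrains_per_year * self.retraining_cost
            infra *= 1 + self.annual_infra_growth  # next year's infrastructure bill
        return total

    def development_share(self, years: int) -> float:
        """Fraction of the multi-year budget spent on initial development."""
        return self.initial_development / self.total_cost(years)


flat = CostPlan(200_000, 8_000, 2_000, 15_000, retrains_per_year=4)
growing = CostPlan(200_000, 8_000, 2_000, 15_000, retrains_per_year=4, annual_infra_growth=0.5)
print(flat.total_cost(3), round(flat.development_share(3), 2))  # 740000.0 0.27
print(growing.total_cost(3))                                    # 908000.0
```

Under these assumed numbers, initial development is only about a quarter of a three-year budget. Infrastructure growth from added features raises the total further.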

Maintain relevance through retraining, monitoring, and version control.

Track drift, update models with fresh data, and measure performance against real-world outcomes.

Focus on tangible business results instead of theoretical possibilities.

Ensure KPIs capture ROI, accuracy, adoption, and user satisfaction.
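These KPIs can be computed from a handful of raw measurements. The sketch below uses the standard ROI ratio, (benefit − cost) / cost. The input fields, the 1-5 satisfaction scale, and all sample values are hypothetical assumptions. How "measured benefit" is attributed to the model is a business decision the code cannot make.

```python
from dataclasses import dataclass


@dataclass
class KpiInputs:
    measured_benefit: float    # e.g. savings or revenue attributed to the model
    total_cost: float          # full lifecycle cost, not just development
    correct_predictions: int
    total_predictions: int
    active_users: int
    eligible_users: int
    satisfaction_scores: list  # e.g. survey ratings on a 1-5 scale


def kpi_summary(k: KpiInputs) -> dict:
    """Compute ROI, accuracy, adoption, and satisfaction from raw counts."""
    return {
        "roi": (k.measured_benefit - k.total_cost) / k.total_cost,
        "accuracy": k.correct_predictions / k.total_predictions,
        "adoption_rate": k.active_users / k.eligible_users,
        "avg_satisfaction": sum(k.satisfaction_scores) / len(k.satisfaction_scores),
    }


summary = kpi_summary(KpiInputs(
    measured_benefit=900_000, total_cost=740_000,
    correct_predictions=8_700, total_predictions=10_000,
    active_users=420, eligible_users=600,
    satisfaction_scores=[4, 5, 3, 4],
))
print({name: round(value, 3) for name, value in summary.items()})
# {'roi': 0.216, 'accuracy': 0.87, 'adoption_rate': 0.7, 'avg_satisfaction': 4.0}
```

Reporting all four together prevents a technically accurate model with low adoption or negative ROI from being declared a success.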

Ensuring AI success requires discipline at every layer, from data governance to model lifecycle management, team alignment, and ecosystem oversight. Projects that prioritize structure over shortcuts consistently achieve better adoption, performance, and business impact.

Artificial intelligence transforms industries only when strategy, data, and execution align. Project failures follow predictable paths: weak data quality, inflated expectations, missing proof of concept, and misaligned teams.

AI does not fail randomly.

AI fails when structure and disciplined planning are absent. Even the most advanced AI model cannot succeed without strong foundations, proper governance, and continuous management.

Organizations pursuing long-term success in enterprise AI solutions and machine learning development services must treat AI as a complete system, integrating data pipelines, model tuning, monitoring, and team alignment.

In the evolving AI landscape, AtheosTech exemplifies how clarity, disciplined strategy, and intelligence-driven execution turn fragile experiments into scalable, resilient, and impactful solutions.

Most AI project failures stem from poor data quality, misaligned objectives, weak proof of concept, and a lack of structured planning. Even the most powerful AI solutions cannot overcome flawed inputs or fragmented execution.

A proof of concept validates the feasibility, accuracy, and business value of AI models before scaling. It prevents wasted resources and ensures enterprise AI solutions deliver measurable outcomes rather than experimental results.

AI models cannot be deployed once and left alone. They require continuous monitoring, retraining, and evaluation cycles to maintain relevance. Market shifts, evolving user behavior, and data drift make lifecycle management essential for long-term success.

Alignment between leadership, technical teams, and business stakeholders ensures objectives, expectations, and KPIs are consistent. Misaligned teams frequently cause AI projects to fail, even if the AI model is technically sound.

AI initiatives can start small but scale quickly in cost due to infrastructure, cloud services, and feature expansions. Proper funding, budgeting, and long-term planning are critical to prevent project failures and ensure ROI.